Guaranteed Expert Consultation Within 1 Hour. Click Here!

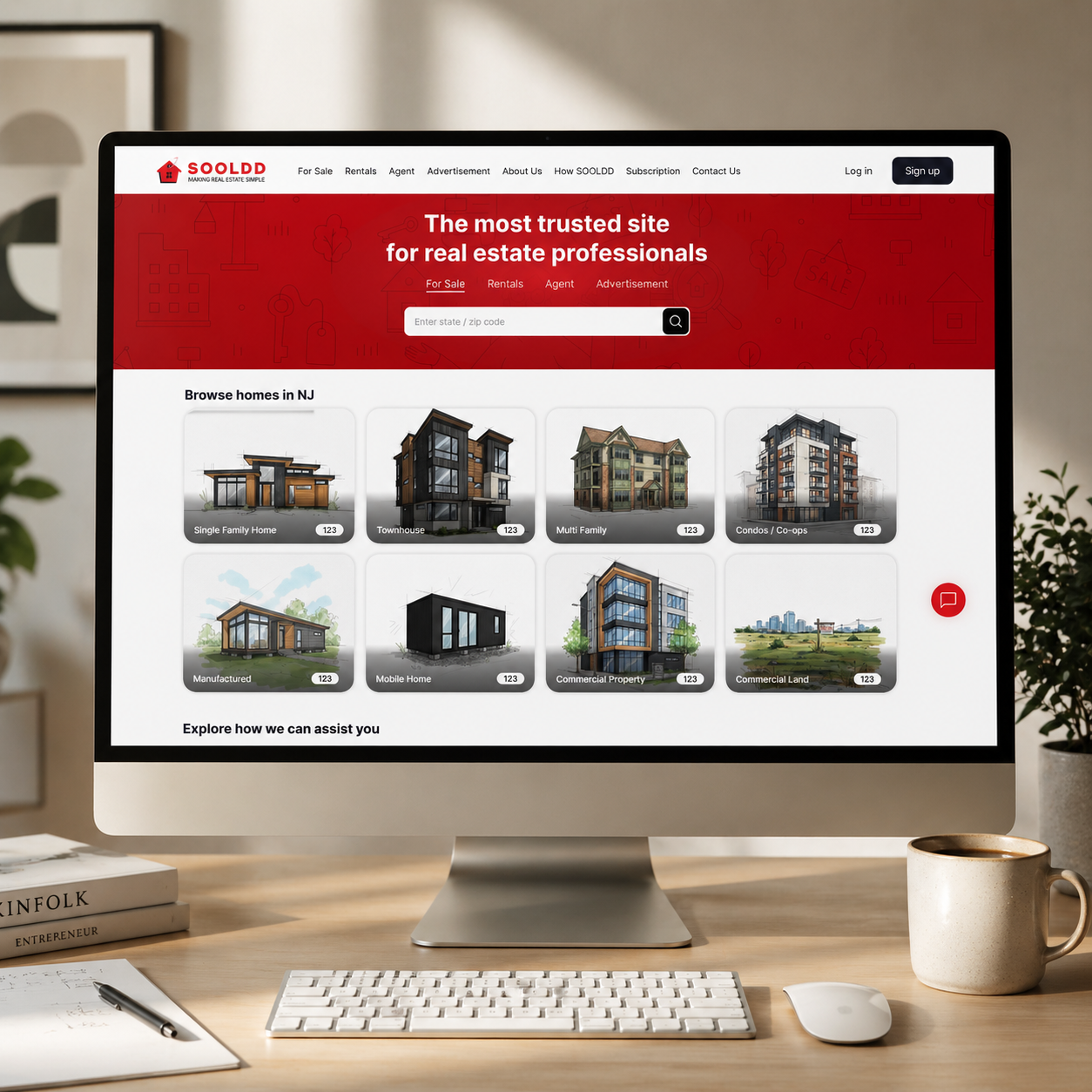

NewAgeSysIT designed and deployed a multi-agent AI chatbot for Sooldd, transforming a fully manual, 4.2-hour inquiry process into an AI-driven experience that resolves most property inquiries in under 12 seconds.

Search Journey: desktop + mobile synced

Automation: inquiry resolution at scale

94%: inquiries resolved autonomously

12 sec: time to resolve 94% of property inquiries

38%: increase in premium subscription revenue in 90 days

Within 90 days of deployment, Sooldd recorded a 38% increase in premium subscription revenue, a 50% relative improvement in trial-to-paid conversion, and a 40% growth in registered users.

Sooldd is a digital real estate platform that lets property seekers search listings, submit inquiries, and engage with agents across major US metro areas. It operates consumer-facing web and mobile applications, with the mobile apps distributed through the App Store and Google Play. Core user activities include property search, agent matching, inquiry submission, and scheduling.

Prior to AI deployment, Sooldd's support team handled more than 1,200 inquiries per week, which required 22 hours of dedicated staff time each week for inquiry management alone. The average response time per inquiry stood at 4.2 hours, placing significant strain on operational capacity as user demand continued to grow.

The most critical point in the user journey was the agent-matching process, which typically took 2-3 business days under the manual model. As user volume increased, this operating model became a bottleneck for platform growth: slow responses and agent-matching delays directly suppressed user retention and conversion rates, signaling the need for an automated solution.

The AI chatbot transformed how our users interact with the platform. We went from users abandoning searches out of frustration to completing property inquiries in under a minute — that directly impacted our subscription numbers.

— Sooldd Founder

Sooldd's property inquiry process was fully manual: support staff handled more than 1,200 inquiries by hand each week, with an average response time of 4.2 hours per inquiry, and committed 22 hours every week to inquiry management alone. As user volume grew, the model stopped scaling. The team couldn't respond faster without increasing headcount, and slow response times led users to abandon the platform mid-search.

Matching a property seeker to a relevant agent took 2-3 business days under the manual process. That delay suppressed Sooldd's trial-to-paid conversion rate, which stood at 18% before AI deployment: users who couldn't get a quick agent match during the trial period didn't convert to paid subscribers. The matching process also relied on staff to manually review inquiry intent and location, with no system for real-time routing.

The friction in Sooldd's inquiry and search experience was reflected in the platform's App Store rating, which settled at 3.7 stars. Staying below the 4.0 threshold hurt organic discovery across the App Store and Google Play. In addition, 30-day retention was 54%, meaning nearly half of new users disengaged within the first month. The primary complaint behind both figures was slow, inconsistent inquiry responses: a platform experience failure that suppressed organic discovery and accelerated churn before users completed their first month.

Sooldd had a substantial and growing database of market intelligence, property listings, and agent profiles, but users had no conversational way to query it. There was no system to retrieve property information from natural-language inputs and no structured approach to handling the range of inquiry types, such as pricing, availability, location, agent compatibility, and scheduling. As a result, Sooldd's data asset generated no conversion value: every inquiry still required a human intermediary, which capped the platform's ability to scale without adding headcount.

A multi-agent AI chatbot built on LangGraph orchestration resolved 94% of Sooldd's property inquiries autonomously - cutting response time from 4.2 hours to under 12 seconds and eliminating 18 hours of weekly manual support work.

Real-time agent matching with natural-language intent analysis reduced Sooldd's matching time from 2-3 business days to less than 45 minutes, directly leading to a 50% relative improvement in trial-to-paid conversion within 90 days.

Custom RAG pipelines using hybrid vector and keyword retrieval on pgvector achieved 94.3% response accuracy in pre-launch QA testing and a sub-2% hallucination rate in production. Performance was validated with LangSmith observability both before and after the app launch.

AI chatbot deployment at scale doesn't need to replace the current database infrastructure: NewAgeSysIT deployed pgvector, a PostgreSQL extension, alongside Sooldd's existing MySQL database, enabling semantic property search without a complete data migration.

For consumer platforms, AI chatbot quality directly impacts App Store ratings: Sooldd's rating improved from 3.7 to 4.6 stars after the AI launch, a 0.9-star gain driven by resolving the response-speed complaints that dominated pre-AI reviews.

If you're building a real estate platform, or any product where users need instant, accurate answers from a complex database, NewAgeSysIT has delivered this at production scale. The Sooldd AI chatbot now resolves 94% of inquiries autonomously.

NewAgeSysIT built the Sooldd AI chatbot on a multi-agent architecture, using LangChain and LangGraph for agentic workflow orchestration. Instead of a single-prompt chatbot giving generic responses, the system routes every query to a dedicated agent: a retrieval agent for property knowledge, an SQL agent for structured database queries, a scheduling agent for booking appointments, and an escalation agent for edge cases that require a human handoff. This architecture lets the system handle the full complexity of real estate inquiry types rather than degrading to a one-size-fits-all response model.
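The routing idea can be illustrated with a minimal, library-free sketch. This is not the LangGraph implementation described above: the keyword lists, the `classify` and `route` helpers, and the `AgentReply` type are all hypothetical, and a production router would more likely use an LLM or a trained classifier than keyword matching.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple

# Hypothetical keyword lists. The case study names the four agents but does
# not say how queries are classified; keyword matching is a stand-in here.
KEYWORDS: Dict[str, Tuple[str, ...]] = {
    "scheduling": ("schedule", "book", "appointment", "tour", "viewing"),
    "sql": ("bedroom", "under $", "price", "available", "parking"),
    "retrieval": ("policy", "guide", "neighborhood", "how do", "what is"),
}


@dataclass
class AgentReply:
    agent: str
    text: str


def classify(query: str) -> str:
    """Return the first intent whose keywords appear, else 'escalation'."""
    lowered = query.lower()
    for intent, words in KEYWORDS.items():
        if any(word in lowered for word in words):
            return intent
    # Nothing matched: treat it as an edge case for human handoff.
    return "escalation"


def make_agent(name: str) -> Callable[[str], AgentReply]:
    """Build a placeholder agent that only echoes which agent was chosen."""
    def agent(query: str) -> AgentReply:
        return AgentReply(agent=name, text=f"[{name}] handling: {query}")
    return agent


AGENTS: Dict[str, Callable[[str], AgentReply]] = {
    name: make_agent(name)
    for name in ("retrieval", "sql", "scheduling", "escalation")
}


def route(query: str) -> AgentReply:
    """Dispatch the query to exactly one dedicated agent."""
    return AGENTS[classify(query)](query)
```

The design point is that unmatched queries fall through to the escalation agent by default, so an unrecognized request ends in a human handoff rather than a generic answer.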

A schema-aware natural-language property search agent (the SQL agent) lets users query Sooldd's MySQL property database in plain English, for example 'Show me 3-bedroom apartments under $2,500 in Austin with parking', and get structured, accurate, real-time results. The agent uses schema grounding and query validation layers to prevent insecure execution, and structured output enforcement to keep integration with the Sooldd frontend consistent. It replaced a process in which staff manually performed database lookups on users' behalf.
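A query validation layer of the kind described here might look like the sketch below. It is an assumption-heavy illustration, not Sooldd's code: the `listings` table and its columns are invented, SQLite's in-memory database stands in for MySQL so the example runs without a server, and the checks (read-only, single statement, known tables, bound parameters) are one plausible reading of "schema grounding and query validation".

```python
import re
import sqlite3

# Hypothetical schema the agent is grounded in (table -> allowed columns).
SCHEMA = {"listings": {"id", "city", "bedrooms", "rent", "has_parking"}}

FORBIDDEN = re.compile(
    r"\b(insert|update|delete|drop|alter|create|attach|pragma)\b", re.I
)
TABLE_REF = re.compile(r"\b(?:from|join)\s+([A-Za-z_][A-Za-z0-9_]*)", re.I)


def validate_sql(sql: str) -> str:
    """Accept only a single read-only SELECT over known tables."""
    stmt = sql.strip().rstrip(";").strip()
    if ";" in stmt:
        raise ValueError("multiple statements are not allowed")
    if not stmt.lower().startswith("select"):
        raise ValueError("only SELECT statements are allowed")
    if FORBIDDEN.search(stmt):
        raise ValueError("statement contains a forbidden keyword")
    tables = TABLE_REF.findall(stmt)
    if not tables:
        raise ValueError("no table referenced")
    for table in tables:
        if table.lower() not in SCHEMA:
            raise ValueError(f"unknown table: {table}")
    return stmt


def run_query(conn: sqlite3.Connection, sql: str, params: tuple = ()) -> list:
    """Validate first, then execute with bound parameters (never string-built)."""
    return conn.execute(validate_sql(sql), params).fetchall()


# Demo data: SQLite stands in for the MySQL property database.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE listings "
    "(id INTEGER, city TEXT, bedrooms INTEGER, rent INTEGER, has_parking INTEGER)"
)
conn.executemany(
    "INSERT INTO listings VALUES (?, ?, ?, ?, ?)",
    [(1, "Austin", 3, 2400, 1), (2, "Austin", 3, 2900, 1), (3, "Dallas", 3, 2000, 1)],
)

# "3-bedroom apartments under $2,500 in Austin with parking"
rows = run_query(
    conn,
    "SELECT id FROM listings "
    "WHERE city = ? AND bedrooms = ? AND rent < ? AND has_parking = 1",
    ("Austin", 3, 2500),
)
```

Regex checks like these are a coarse first filter. A production system would typically also run the agent under a read-only database account so that a missed case cannot modify data.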

A custom RAG implementation for property search and policy knowledge was built to ensure that the chatbot's responses are grounded in Sooldd's property listings, market guides, agent profiles, and platform policies. The retrieval system uses pgvector, a PostgreSQL extension deployed alongside Sooldd's existing MySQL database, and hybrid search that combines vector similarity and keyword matching with metadata filtering for property type and location. The architecture achieved 94.3% response accuracy in domain-specific QA testing before go-live and a sub-2% hallucination rate in production, as measured through LangSmith observability.
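Hybrid retrieval with metadata filtering can be sketched in plain Python. Everything here is illustrative: the `Doc` type, the two-dimensional toy embeddings, the word-overlap keyword score, and the `alpha` weighting are assumptions, and the production system runs this kind of search in pgvector rather than in application code.

```python
import math
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Doc:
    doc_id: str
    text: str
    embedding: List[float]  # toy vectors; real embeddings come from a model
    property_type: str
    city: str


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity, returning 0.0 for zero-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def keyword_score(query: str, text: str) -> float:
    """Fraction of query words that also appear in the document text."""
    query_words = set(query.lower().split())
    text_words = set(text.lower().split())
    return len(query_words & text_words) / len(query_words) if query_words else 0.0


def hybrid_search(
    query: str,
    query_embedding: List[float],
    docs: List[Doc],
    *,
    property_type: Optional[str] = None,
    city: Optional[str] = None,
    alpha: float = 0.6,  # hypothetical weight on the vector score
    k: int = 3,
) -> List[Doc]:
    """Filter by metadata, then rank by a weighted vector + keyword score."""
    candidates = [
        d for d in docs
        if (property_type is None or d.property_type == property_type)
        and (city is None or d.city == city)
    ]
    scored = [
        (alpha * cosine(query_embedding, d.embedding)
         + (1 - alpha) * keyword_score(query, d.text), d)
        for d in candidates
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:k]]
```

Filtering on property type and location before scoring keeps out-of-area listings from ever reaching the ranking step, which is the role metadata filtering plays in the description above.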

The platform includes a real-time intent- and location-matching module that captures the user's inquiry context (property type, metro location, budget range, and timeline) and matches it to a compatible agent from Sooldd's network within 45 minutes. This replaced the manual 2-3 business-day matching process and raised trial-to-paid conversion from 18% to 27%, a 50% relative improvement achieved within 90 days, because users receive a qualified agent recommendation during their active session rather than waiting and disengaging.
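As a rough illustration of intent and location matching, the sketch below treats metro coverage and availability as hard requirements and uses property type and budget as ranking signals. The `Inquiry` and `Agent` fields, the score weights, and the tie-break on open slots are all hypothetical, and the timeline signal mentioned above is left out for brevity; the source does not describe the actual matching logic.

```python
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass
class Inquiry:
    property_type: str
    metro: str
    budget: int


@dataclass
class Agent:
    name: str
    metros: Set[str]
    property_types: Set[str]
    min_budget: int
    max_budget: int
    open_slots: int  # hypothetical measure of current availability


def score(inquiry: Inquiry, agent: Agent) -> int:
    """Higher is better: property type counts double, budget fit counts once."""
    points = 0
    if inquiry.property_type in agent.property_types:
        points += 2
    if agent.min_budget <= inquiry.budget <= agent.max_budget:
        points += 1
    return points


def match_agent(inquiry: Inquiry, agents: List[Agent]) -> Optional[Agent]:
    """Pick the best available agent who covers the inquiry's metro area."""
    eligible = [
        a for a in agents
        if inquiry.metro in a.metros and a.open_slots > 0
    ]
    if not eligible:
        return None  # no coverage: a candidate for human escalation
    # Break score ties in favor of the agent with more open slots.
    return max(eligible, key=lambda a: (score(inquiry, a), a.open_slots))
```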

The LLM is hosted on Amazon Bedrock, and the system runs as a cloud-hosted real estate AI assistant on a cloud-native microservices architecture on AWS. The entire platform, including the agent matching, chatbot, and scheduling modules, was delivered to the App Store and Google Play in 14 weeks from discovery to launch.

| Layer | Technology |
|---|---|
| Orchestration Framework | LangChain · LangGraph (agentic workflow orchestration) |
| Agent Architecture | Multi-agent system · SQL Agent pipeline · Custom tool-routing · Escalation agent |
| LLM Deployment | Amazon Bedrock (hosted LLM) |
| Mobile Frontend | React Native (iOS & Android, App Store & Google Play) |
| Backend | NestJS |
| Primary Database | MySQL (Sooldd property and listings database) |
| Vector Database | pgvector (PostgreSQL extension deployed alongside MySQL) |
| Retrieval Strategy | Hybrid search (vector + keyword) · Metadata filtering · Reranking |
| Output Validation | JSON schema validation · Structured output enforcement |
| Observability & Monitoring | LangSmith (AI trace observability) · AWS CloudWatch (infrastructure metrics) |
| Cloud Infrastructure | Amazon Web Services (AWS), cloud-native microservices |
| Security | Guardrails & validation layers · Secure API integration · Access control |
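The output validation layer listed in the stack can be sketched as a strict parser for model output. This is a stdlib-only stand-in for JSON schema validation: the field names in `LISTING_RESULT_SCHEMA` and the `parse_listing_result` helper are hypothetical, since the source does not publish the payload shape the frontend expects.

```python
import json
from typing import Any, Dict

# Hypothetical shape of one listing result sent to the frontend.
LISTING_RESULT_SCHEMA: Dict[str, Any] = {
    "listing_id": int,
    "city": str,
    "bedrooms": int,
    "monthly_rent": (int, float),
    "has_parking": bool,
}


def parse_listing_result(raw: str) -> Dict[str, Any]:
    """Parse model output and reject anything that deviates from the schema."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")

    missing = LISTING_RESULT_SCHEMA.keys() - data.keys()
    extra = data.keys() - LISTING_RESULT_SCHEMA.keys()
    if missing or extra:
        raise ValueError(
            f"missing fields: {sorted(missing)}, unexpected fields: {sorted(extra)}"
        )

    for field, expected in LISTING_RESULT_SCHEMA.items():
        value = data[field]
        # bool is a subclass of int in Python, so reject it for numeric fields.
        if isinstance(value, bool) and expected is not bool:
            raise ValueError(f"{field}: expected a number, got a boolean")
        if not isinstance(value, expected):
            raise ValueError(f"{field}: unexpected type {type(value).__name__}")
    return data
```

Rejecting unexpected fields as well as missing ones means the frontend only ever receives one payload shape, which is the practical point of structured output enforcement.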

| Phase | Duration | Key Output |
|---|---|---|
| Discovery & Scoping | Weeks 1–2 | Requirement mapping + data readiness assessment: MySQL schema audit, RAG knowledge base scoping, API inventory |
| Architecture Design | Week 3 | Multi-agent architecture confirmed · Tech stack finalized · LangGraph workflow designed |
| Sprint 1: SQL Agent | Weeks 4–6 | SQL agent build: schema grounding, query validation, NestJS backend integration |
| Sprint 2: RAG Pipeline | Weeks 7–9 | RAG pipeline: pgvector setup, hybrid retrieval, knowledge base ingestion, accuracy tuning |
| Sprint 3: Orchestration & UI | Weeks 10–12 | Agent orchestration layer, scheduling module, real-time agent matching, React Native chatbot UI |
| UAT & Pre-launch Testing | Weeks 13–14 | Domain-specific QA: 94.3% accuracy confirmed · Hallucination rate validated at <2% · Load testing |
| Go-Live | Week 14 | App Store & Google Play deployment · LangSmith + CloudWatch monitoring live · Total: 14 weeks discovery to go-live |

NewAgeSysIT's two-week discovery phase started with a detailed MySQL schema audit, RAG knowledge base scoping, and an API inventory, establishing a data-centric foundation before development began. This upfront investment in data readiness prevented mid-build scope changes and was a core factor in delivering the entire platform within the 14-week timeline.

| Role | Responsibilities |
|---|---|
| 1 × Project Lead | Delivery management, stakeholder communication, and sprint planning |
| 2 × AI / ML Engineers | LangGraph architecture, SQL agent, RAG pipeline, LangSmith observability |
| 2 × Full-Stack Developers | Backend API (NestJS), chatbot UI, mobile deployment (iOS & Android) |
| 1 × QA Engineer | Domain-specific test set creation, accuracy validation, and UAT sign-off |

The Sooldd AI chatbot resolved 94% of property inquiries without human escalation, up from 0% before deployment, across a volume of 1,200+ inquiries per week.

| Metric | Before Deployment | After Deployment | Change |
|---|---|---|---|
| Response Time | 4.2 hours (avg) | Under 12 seconds | 99.9% reduction |
| Manual Staff Overhead | 22 hrs/week | 4 hrs/week (human escalations only) | 18 hrs/week reclaimed (staff redeployed to higher-value tasks) |
| Agent-Matching Time | 2–3 business days (manual review) | Under 45 minutes (real-time intent + location matching) | In-session conversion: users receive an agent match before leaving the platform |
| Inquiries Automated | 0 (fully manual) | 94% of 1,200+ weekly inquiries | 94% autonomous |
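The reduction figures in this table follow from simple arithmetic on the stated before and after values. The short check below uses only numbers from the table and treats "under 12 seconds" as exactly 12 seconds, so the result is a lower bound on the reduction.

```python
# Response time: 4.2 hours before, 12 seconds after (upper bound).
before_seconds = 4.2 * 3600          # 4.2 hours is about 15,120 seconds
after_seconds = 12

reduction_pct = (before_seconds - after_seconds) / before_seconds * 100
print(f"Response time reduction: {reduction_pct:.1f}%")

# Manual staff overhead: 22 hrs/week before, 4 hrs/week after.
hours_reclaimed = 22 - 4
print(f"Staff hours reclaimed per week: {hours_reclaimed}")
```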

| Metric | Before Deployment | After Deployment | Change |
|---|---|---|---|
| Support Staffing Cost | Pre-AI baseline | 62% reduction in platform support staffing spend | Direct cost savings from automated inquiry resolution after AI deployment |
| Trial-to-Paid Conversion | 18% | 27% trial-to-paid rate | +50% relative improvement achieved within 90 days of deployment |
| Premium Subscription Revenue | Pre-deployment baseline | +38% increase | Achieved in the first 90 days post-deployment |
| 30-Day User Retention | 54% | 71% 30-day retention | +17 percentage point improvement, driven by faster responses and reduced search friction |
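This table mixes two kinds of change: conversion is reported as a relative improvement, while retention is reported in percentage points. The helper functions below (hypothetical names, values taken from the table) show how the two differ.

```python
def relative_change_pct(before: float, after: float) -> float:
    """Change expressed as a percentage of the starting value."""
    return (after - before) / before * 100


def point_change(before: float, after: float) -> float:
    """Change in percentage points: a plain difference between two rates."""
    return after - before


# Trial-to-paid conversion: 18% -> 27%
conversion_points = point_change(18, 27)            # 9 percentage points
conversion_relative = relative_change_pct(18, 27)   # 50% relative improvement

# 30-day retention: 54% -> 71%
retention_points = point_change(54, 71)             # 17 percentage points
retention_relative = relative_change_pct(54, 71)    # about 31.5% relative
```

Read this way, the 9-point rise in conversion is the same result as the +50% relative figure, and the 17-point retention gain corresponds to a relative improvement of roughly 31.5%.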

| Metric | Before Deployment | After Deployment | Change |
|---|---|---|---|
| App Store Rating | 3.7 stars (pre-AI) | 4.6 stars (post-AI launch) | +0.9 star improvement; primary complaint (slow responses) resolved by AI |
| Response Accuracy | N/A (manual, no systematic measurement) | 94.3% accuracy on domain-specific QA test set | Validated pre-launch and measured in production via LangSmith observability |
| Hallucination Rate | N/A (manual process) | <2% in production | Measured via LangSmith AI trace observability, below enterprise benchmark |
| Chatbot Adoption | N/A | 73% of active users | Within first 30 days |

| Metric | Before | After | Change |
|---|---|---|---|
| Registered Users | Pre-launch baseline | +40% increase in registered users | In the 90 days following the AI chatbot launch |

We grow strong with a 100% in-house team, 30+ years of industry expertise, and proven results. From concept to launch, we deliver innovation with precision and reliability.
